- Blog

- Formation allplan

- Nba 2k17 servers coming back

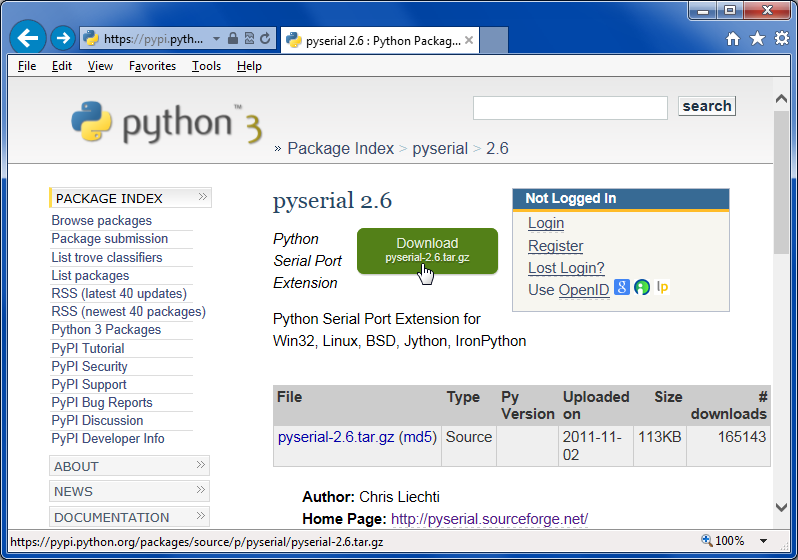

- Python download and unzip file

- How to increase download speed windows 10

- Download video from website

- Iriscan express 3 driver for windows 10

- Launcher kindle fire 5

- Express zip registration code generator

- Stata MP 16-0 Serial Number

- Money in critical ops pc hack

- Doodlebob and the magic pencil online

- Music paradise pro mp3 download

- App store download button

- Fluidsim 5 crack deutsch

- Internet download speed test

- Project x love potion disaster cream gallery mode

- Download facebook video call messenger

- Web dynpro select options

- Fabfilter pro q 3 license key free reddit

- Xforce keygen autocad 2018 64 bit free download windows 10

- Cities skylines xbox one traffic manager

- Genshin impact download steam

- Fxhome hitfilm pro 2017 v5-0-6511-32872

- Download chrome windows xp

- 32bit firefox download

- Can i download spotify music to my apple watch

- Tevion 8 In 1 Card Reader Driver Win 7

`filepaths = getallfilepaths(directory)`: Here we pass the directory to be zipped to the `getallfilepaths()` function and obtain a list containing all file paths. In each iteration, all files present in that directory are appended to a list called `filepaths`. In the end, we return all the file paths.

When writing extracted data to disk, the output file is opened with `with open(out_file_path, 'w') as outfile:`.

With Curl, if you want the downloaded file to be saved under the same name as in the URL, use the `--remote-name` or `-O` command-line option. If you don't use any of these options, Curl writes the downloaded data to standard output.
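The directory-zipping steps described above can be sketched with the standard library alone. This is a minimal illustration, not the original author's code: the helper is spelled `get_all_file_paths` here (the text writes it as `getallfilepaths`), and `zip_directory` and its parameters are hypothetical names introduced for the example.

```python
import os
import zipfile


def get_all_file_paths(directory):
    """Walk `directory` and return the path of every file beneath it."""
    file_paths = []
    # In each iteration, the files found in the current folder are appended.
    for root, _dirs, files in os.walk(directory):
        for filename in files:
            file_paths.append(os.path.join(root, filename))
    return file_paths


def zip_directory(directory, zip_path):
    """Write every file under `directory` into a new archive at `zip_path`.

    `zip_directory` is a hypothetical helper, not part of the original text.
    """
    file_paths = get_all_file_paths(directory)
    with zipfile.ZipFile(zip_path, "w", zipfile.ZIP_DEFLATED) as archive:
        for path in file_paths:
            # Store names relative to `directory` so the archive is portable.
            archive.write(path, arcname=os.path.relpath(path, directory))
    return file_paths
```

Keep `zip_path` outside `directory`; otherwise the walk may pick up the partially written archive itself.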

Curl's `-o` (`--output`) option allows you to save the downloaded file to a local drive under the specified name.

Finally, download the file by using the `download_file` method and pass in the variables: `service.Bucket(bucket).download_file(filename, downloadedfile)`.

Using asyncio: you can use the asyncio module to handle system events. It works around an event loop that waits for an event to occur and then reacts to that event.
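As a rough sketch of how the event loop can drive several downloads at once, the example below hands each blocking fetch to a worker thread and lets asyncio wait for all of them. It assumes Python 3.9 or later (for `asyncio.to_thread`); `fetch`, `download_all`, and the `fetch_one` parameter are hypothetical names for illustration, not something the text above defines.

```python
import asyncio
import urllib.request


def fetch(url):
    """Blocking download of one URL; returns the response body as bytes."""
    with urllib.request.urlopen(url) as response:
        return response.read()


async def download_all(urls, fetch_one=fetch):
    """Run `fetch_one` for every URL concurrently and collect the results.

    Each blocking call runs in a worker thread, so the event loop stays
    free to react as individual downloads finish.
    """
    tasks = [asyncio.to_thread(fetch_one, url) for url in urls]
    # gather() returns the results in the same order as `urls`.
    return await asyncio.gather(*tasks)
```

A call such as `asyncio.run(download_all(list_of_urls))` would start the loop and return one bytes object per URL, in input order.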

To download a file with Curl, use the `--output` or `-o` command-line option.

I found this question while searching for methods to download and unzip a gzip file from a URL, but I didn't manage to make the accepted answer work in Python 2.7. Here's what worked for me (adapted from here): `import urllib2`, then `print('Downloading SEED Database from: {}'.format(url))`, `compressed_file = StringIO.StringIO(response.read())`, and `decompressed_file = gzip.GzipFile(fileobj=compressed_file)`.
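The fragments above target Python 2.7 (`urllib2`, `StringIO`). A Python 3 version of the same idea might look like the sketch below, which swaps in `urllib.request` and `io.BytesIO`. The function names `gunzip_bytes` and `download_and_gunzip` are assumptions made for this example, and the output is opened in binary mode because decompressed data is bytes.

```python
import gzip
import io
import urllib.request


def gunzip_bytes(compressed):
    """Decompress gzip data held in memory and return the raw bytes."""
    # GzipFile accepts any file-like object through the fileobj= argument.
    with gzip.GzipFile(fileobj=io.BytesIO(compressed)) as decompressed_file:
        return decompressed_file.read()


def download_and_gunzip(url, out_file_path):
    """Fetch a .gz file from `url` and write its decompressed contents.

    Returns the number of decompressed bytes written.
    """
    with urllib.request.urlopen(url) as response:
        compressed = response.read()
    data = gunzip_bytes(compressed)
    with open(out_file_path, "wb") as outfile:
        outfile.write(data)
    return len(data)
```

Reading the whole response into memory keeps the sketch short; for very large files, streaming the response through `gzip.GzipFile` in chunks would be the safer choice.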

# Python download and unzip file

Just use `gzip.GzipFile(fileobj=handle)` and you'll be on your way. In other words, it's not really true that "the Gzip library only accepts filenames as arguments and not handles"; you just have to use the `fileobj=` named argument.

It will download the zip file and extract it to the specified folder.
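For a zip archive rather than a gzip stream, downloading and extracting to a folder could be sketched as follows. This is one possible approach using only the standard library, not necessarily the code the text refers to; `extract_zip_bytes`, `download_and_unzip`, and their parameters are hypothetical names.

```python
import io
import urllib.request
import zipfile


def extract_zip_bytes(data, dest_dir):
    """Extract an in-memory zip archive into `dest_dir`.

    Returns the list of member names contained in the archive.
    """
    # ZipFile needs a seekable file-like object, so wrap the bytes.
    with zipfile.ZipFile(io.BytesIO(data)) as archive:
        archive.extractall(dest_dir)
        return archive.namelist()


def download_and_unzip(url, dest_dir):
    """Download the zip file at `url` and extract it to `dest_dir`."""
    with urllib.request.urlopen(url) as response:
        data = response.read()
    return extract_zip_bytes(data, dest_dir)
```

Calling `download_and_unzip(url, "some_folder")` creates the folder contents from the archive and returns the member names, which is handy for checking what was unpacked.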